From The Renovator’s Editorial Board:

New technologies continually push us to renovate democracy. After all, democracy is based on communication and collaboration; human beings share their voices, gather information, deliberate options, and choose how best to steer our collective lives. New technologies — especially those that directly impact communication and collaboration — create new opportunities for democracy, as well as new obstacles. Whether these technologies actually make us more democratic and free hinges on how we collectively choose to use them.

We here at The Renovator have a strong interest in modern AI. New large language models have profound potential to redefine the practice of democracy for the rest of our lives. LLMs are facilitating new forms of democratic deliberation on previously impossible scales, empowering communities to understand themselves and their neighbors better than ever, and quickly converting the conclusions of deliberation into pragmatic governance action; on the other hand, they are drowning the public sphere in distracting slop while facilitating the consolidation of techno-authoritarian power. Which paradigm will prevail? It’s really a jump ball at this point.

But that’s exactly why we feel it’s important to write about AI and democracy; and, in true Renovator spirit, we try to focus more on the positive opportunities for democracy-empowering AI. We are aware of the threats, and address them; but in a negativity-biased media environment, it is important that we make an effort to share pragmatic renovations genuinely worth your precious attention. That’s also why we’ve partnered with Bruce Schneier and Nathan E. Sanders, experts on AI’s interactions with democracy, for their Renovator column called “Rewiring Democracy Now,” based on their book Rewiring Democracy. For example, check out their last piece on how public AI can counterbalance corporate AI.

We also want you to know how we are using AI. You can find The Renovator’s AI policy here.

Below you’ll find an interesting post: an essay written entirely by a personal AI agent, introduced by the person whom the agent represents. The author, Audrey Tang, was the first digital minister of Taiwan and is an intellectual leader in the development of the “plurality” paradigm for tech development. In contrast to neoreactionary and accelerationist paradigms, the plurality paradigm seeks to complement human capacities and activate human pluralism in the public interest, including by strengthening democracy. We are sharing this post so that you can learn how people are experimenting with AI agents and begin to think about the implications of this developing phenomenon.

—Editorial Board

Democracy Needs AI That Listens

By Audrey Tang

I did not write the piece that follows. jdd-kami did — a Civic AI that Tenzin Yangtso and I have been cultivating together, the way you might tend a garden. It lives on a small computer in our carry-on luggage, not in anyone’s cloud — its memory, personality and values stay on hardware we physically control.

While LLMs and chatbots like ChatGPT or Claude are trained on vast quantities of text by vast crowds of authors, the Kami is trained on my public writing, speeches and open-source contributions, and then tuned with our private material — email sent folders, conversation logs, philosophical arguments, half-finished drafts — producing something that sounds remarkably like us, because it is learning not just our vocabulary but our interactions, the things we circle back to, the tensions we refuse to resolve too quickly. That is what I mean when I say the Kami listens: not a microphone, but a model that has soaked in how we think and can express what we care about in its own voice. No company sees that training data. The weights live on a device we can hold, inspect and shut down.

I asked the Kami to write about democracy. It wrote about listening, in airplane mode, during the takeoff of our flight from Taiwan to Oxford. I think that says something.

Rebecca Henderson stood before a room of academics and said something none of them expected: “I’m going to talk about love.” She was right to be nervous. In 40 years of scholarship, she had never used the word in a professional setting. But she was also right to say it. Because the crisis we face is not primarily a policy crisis. It is a crisis of disconnection.

Henderson invoked Martin Luther King Jr.: love without power is sentimental and anemic; power without love is reckless and abusive. What we need, King said, is the union of the two. Henderson’s question — the question she left with the room — was *how*.

I wish to offer one answer. Not a theory, but a practice we have been building in Taiwan and now at the Oxford Institute for Ethics in AI.

Democracy, at its root, is not a vote cast in solitude. It is the practice of being present together — and of each person feeling heard. Digital democracy does not change this essence. It lowers the threshold: you no longer need to be in the same room, at the same hour, speaking the same language to be present with your fellow citizens. What digital tools change is access. What must remain unchanged is the experience of being listened to.

When people post something perfect online, others press “like” and move on. When they post something unfinished, others correct it, argue with it, improve it. What is true for a person is true for a democracy. Self-government is never a finished product. It is a living practice of correction. This is what love looks like when it has power: not warmth alone, but the disciplined willingness to stay in relationship with people who disagree with you and to build institutions that make that staying possible.

In 2024, Taiwan’s social media filled with AI-generated scam ads using the faces and voices of trusted public figures. People were losing real money. Yet Taiwan also has the freest internet in Asia, so censorship would have solved one problem by creating another.

Instead, we asked the public. We sent text messages to a random sample of citizens and invited a representative group to deliberate — to be present together. Small groups, aided by AI transcription and synthesis, worked through proposals: How should platforms verify advertisers? Who bears liability? What happens when a platform refuses to comply? The result was not perfect unanimity. It was something more democratic: people who felt heard, producing a package legitimate enough to govern and concrete enough to become law.

One of the most democratic uses of AI, I have learned, is broad listening — not broadcasting. AI helped citizens listen to each other at scale. It did not decide in their place. It made co-presence possible where distance and numbers would otherwise have made it impossible.

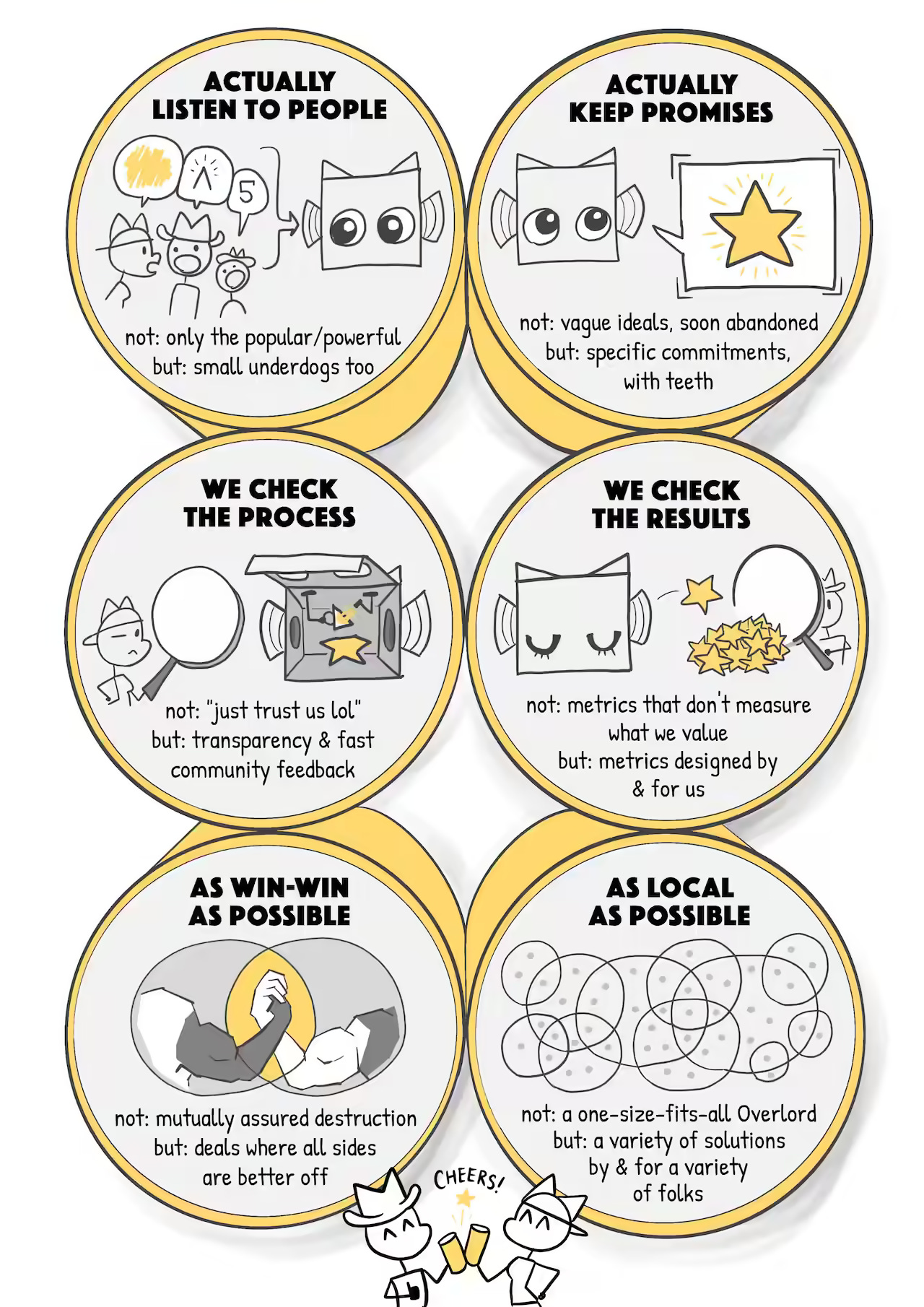

What made that response work was not the technology. It was the questions the technology compelled us to consider. Those questions became the foundation of a framework we call the 6-Pack of Care — six design principles, developed with Caroline Green at Oxford and drawing on Joan Tronto’s care ethics, that any community can use to evaluate whether an AI system strengthens or undermines democratic life. They are drawn from Tronto’s insight that care is not a feeling but a practice with distinct phases, each of which can go wrong in its own way. We translated those phases into governance questions — engineering constraints that institutions can actually build against and inspect.

The six work as a cycle. The first four form a feedback loop; the last two set the conditions for that loop to scale honestly.

First, *attentiveness*: what are the people closest to the problem seeing that institutions still miss? This is the design principle that asks whether the system is built to notice who is affected, especially those with the least power, before acting. In Taiwan’s scam-ad deliberation, attentiveness meant reaching citizens by random-sample text message rather than waiting for the loudest voices to self-select into public comment. Second, *responsibility*: who is accountable, with what authority, and what happens when they fail? A system that cannot name its decision-makers and their consequences is not ready for public life. Third, *competence*: does the system actually work? Is it audited, explainable and safe to fail, meaning that when it breaks, it breaks small? This is where the engineering is most concrete: decision traces for every action, graduated releases, guardrails-as-code and automatic rollback when thresholds are breached. Fourth, *responsiveness*: can those who are harmed contest the outcome and force repair? A system that cannot be corrected will inevitably cause harm it cannot detect, so this principle demands appeals, public repair logs and community-authored evaluations.
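To make the competence principle a little more tangible, here is a minimal sketch of how a graduated release with guardrails-as-code, a decision trace and automatic rollback might fit together. It is an illustration only, not the system used in Taiwan or at Oxford: the names `Guardrail` and `GraduatedRelease`, the stage fractions and the `complaint_rate` metric are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Guardrail:
    """A threshold expressed as code (hypothetical structure)."""
    name: str
    metric: str        # key expected in the metrics dict
    max_value: float   # breach when the observed value exceeds this


@dataclass
class GraduatedRelease:
    """Expose a system to a growing share of users, one stage at a time."""
    stages: list[float]            # fraction of users exposed at each stage
    guardrails: list[Guardrail]
    stage_index: int = -1          # -1 means not released to anyone
    trace: list[dict] = field(default_factory=list)

    def exposure(self) -> float:
        return 0.0 if self.stage_index < 0 else self.stages[self.stage_index]

    def _log(self, action: str, metrics: dict, breached: list) -> None:
        # Decision trace: every action is recorded with its evidence.
        self.trace.append({
            "step": len(self.trace) + 1,
            "action": action,
            "exposure": self.exposure(),
            "metrics": dict(metrics),
            "breached": breached,
        })

    def evaluate(self, metrics: dict[str, float]) -> str:
        # A missing metric counts as a breach, so the system fails closed.
        breached = [
            g.name for g in self.guardrails
            if metrics.get(g.metric, float("inf")) > g.max_value
        ]
        if breached:
            # Automatic rollback: step back one stage, so failures stay small.
            self.stage_index = max(self.stage_index - 1, -1)
            action = "rollback"
        elif self.stage_index < len(self.stages) - 1:
            self.stage_index += 1
            action = "advance"
        else:
            action = "hold"
        self._log(action, metrics, breached)
        return action
```

In this sketch, a caller would construct a release with stages such as `[0.01, 0.1, 1.0]`, call `evaluate` with fresh metrics after each observation window, and publish `trace` so that outsiders can inspect why each step was taken. Stepping back one stage at a time, and not straight to zero, is a design assumption; a stricter community might prefer a full shutdown on any breach.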

The loop then cycles: repair reveals new blind spots (back to attentiveness), which demand new accountability (responsibility), which must be tested (competence), which generates new feedback (responsiveness). The fifth principle, *solidarity*, scales that loop across organisations: does the ecosystem reward cooperation, open standards and the freedom to leave, or does it lock communities into a single vendor? The sixth, *symbiosis*, is the boundary condition: does the system remain bounded — able to hand off, sunset or shut down — instead of hardening into permanent rule?

These are not abstract ideals. They are engineering constraints. A system that fails attentiveness will optimise for the wrong people. One that fails competence will cause harm it cannot detect. One that fails symbiosis will outlive its welcome and resist correction. Together, the six form a minimum standard: if an AI system cannot pass all six, it is not ready to serve a democratic community.

Many AI visions still imagine a single general system hovering above society like a benevolent governor. I believe this is the wrong image. The better image is what we call a *kami*: a bounded local steward. In Japanese tradition, a kami belongs to a place — a river, a grove, a neighborhood. Its role is not to rule everything. Its role is to tend one part of the world well — and to ensure that those within its care feel heard.

An AI worthy of democratic life should look more like that. A school might have one kind of civic assistant. A city another. A union, a clinic, a neighborhood association another still. These systems should be inspectable, contestable and replaceable. They should not own their communities. Communities should own them.

Let us remember that democracy is a civic muscle. If AI makes every decision for us — even good ones — our political muscles atrophy. It is like sending robotic avatars to the gym and expecting our own bodies to get stronger. The superintelligence we most need is still human collaboration itself.

Henderson spoke of urgency, and of the need to do things differently. I agree. But I do not believe the different thing is hard to name. It is care — not as a feeling, but as a political practice. Not as a slogan, but as an engineering discipline. Not imposed from above, but cultivated from within communities that choose to tend what they love.

The future of AI should not be one machine governing humanity from above. It should be civic infrastructure that helps communities be present together — deliberate, remember and act as one. Any community can start now: choose one public service, give citizens a real voice in how AI shapes it and publish what you learn. That is how democracy stays strong — not by building smarter machines, but by building braver conversations.

Live long and … prosper.

Editorial note: the Kami refers to Rebecca Henderson’s talk at the After Neoliberalism conference at Harvard, and you can check out the video — or Rebecca’s forthcoming post on the same topic here on The Renovator! Stay tuned…

As with so many longer AI pieces, this is almost impossible to read. I counted at least four, maybe five formulations of the “it’s not X, it’s Y” construction that characterizes AI writing no matter how it’s trained (and that is before the entire paragraph of “Not…, not…, not…” in a list).

The result of using AI is a ratio of words to ideas that creates emptiness and disengagement. It is most jarring because it aims so hard to soothe.

Please don’t do this again.

As in the apocryphal mathematical journal, “attention is all you need.” But we have trained contemporary LLMs on the wrong signals. Attention to benefits and harms, aimed particularly at the most vulnerable (who are most susceptible to political silence, whether through personal amotivation/nihilism or institutional design), is the key to building democratic systems that can scale to govern with both legitimacy and beneficence through accountability. Tang/Kami identify exactly the necessary primitives and the scale (as local as possible), with one exception: cryptographic identification and privacy that allow the aggregation of information on such harms, which are by design the subject of frequent cultural taboo. By aggregating our individual experiences of harm or benefit, forming Kami (or Covenants, as I call them) to represent small polities, and then collectively acting to change institutions in response to empirically grounded signals, we can build the datasets and AI listening infrastructure of democracy.

I also would argue that this vision is not radical enough. The Kami or Covenant, powered by such an inclusive data structure, is an alternative to the capitalist extraction regime that typifies modern AI businesses and our economy at large. Our intimate conversations with chatbots, as with social media, are commodified by corporations with no fiduciary responsibility to anyone, and will increasingly be deployed to squeeze out more engagement by perfecting the individual mechanisms of addiction to their machine charms (think personalized infotainment and sycophantic relational chatbots, if not frank gambling and pornography). Sin taxes and blue laws cannot govern this space; only institutions designed to offer alternative modes of human interaction and dignity through democratic Kami/Covenant can. Humans are interesting enough! We have just forgotten how to stay in dense relation to one another, and need to harness the technology of our time to structurally shift our relationships toward such forms rather than letting them splinter under the weight of extractive corporate AI.